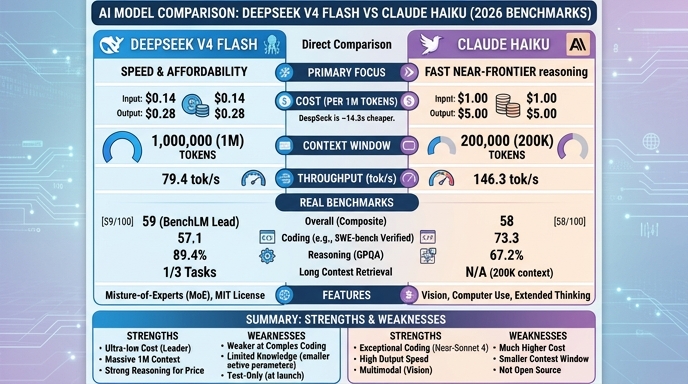

Choosing the right model for software development tasks often comes down to balancing latency and cost against reasoning capability. DeepSeek V4 Flash and Claude 4.5 Haiku both position themselves as highly efficient, low-latency models tailored for high-volume production environments, sub-agent workflows, and real-time developer tooling. Both models offer massive context windows, making them suitable for codebase-aware tasks.

DeepSeek V4 Flash leverages a Mixture-of-Experts (MoE) architecture designed for extreme cost efficiency and speed, providing an open-weight alternative for teams that prioritize self-hosting or reducing dependency on proprietary APIs. Conversely, Claude 4.5 Haiku benefits from Anthropic’s focused training on long-context reliability and advanced tool-use capabilities, often acting as a high-performance, drop-in replacement for more expensive flagship models in latency-sensitive applications.

Visual comparison

Benchmark scores (higher is better)

Strengths and weaknesses

When to use each model

Choose DeepSeek V4 Flash for cost-sensitive, high-volume production systems where you need full control over the model weights or must deploy in air-gapped, private infrastructure. It suits teams that need to process very large inputs (such as full repository scans or log analysis) within a single 1M-token context window without the higher per-token costs typical of proprietary frontier models.
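Before committing to a single-pass repository scan, it can help to check roughly whether the input fits in the window at all. The snippet below is a minimal sketch, not a tokenizer: it assumes about 4 characters per token (a rough heuristic that varies by tokenizer and by programming language) and a hypothetical 8,000-token reserve for the model's reply. Only the 1M-token window comes from the text above; the function names and constants are illustrative.

```python
CONTEXT_WINDOW_TOKENS = 1_000_000  # window size quoted above for DeepSeek V4 Flash
CHARS_PER_TOKEN = 4                # assumption: rough average, not a real tokenizer


def estimate_tokens(text: str, chars_per_token: int = CHARS_PER_TOKEN) -> int:
    """Estimate the token count by ceiling-dividing the character count."""
    return -(-len(text) // chars_per_token)


def fits_in_context(texts, reserve_for_output: int = 8_000,
                    window: int = CONTEXT_WINDOW_TOKENS):
    """Return (estimated_total_tokens, fits) for a collection of documents.

    reserve_for_output is a hypothetical budget kept free for the reply.
    """
    total = sum(estimate_tokens(t) for t in texts)
    return total, total + reserve_for_output <= window


if __name__ == "__main__":
    # Stand-in for file contents read from a repository.
    files = ["def main():\n    pass\n" * 1000, "# README\n" * 200]
    total, ok = fits_in_context(files)
    print(f"~{total} tokens, fits in one window: {ok}")
```

For a real decision, swap the character heuristic for the provider's own token counter, since code and logs often tokenize less efficiently than prose.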

Choose Claude 4.5 Haiku for latency-sensitive applications such as real-time coding assistants, IDE plugins, or interactive UI components, where response time is the primary driver of user experience. It is a good fit for workflows that depend on reliable, autonomous tool use and high-fidelity code generation, and for developers who prefer a managed API over running their own infrastructure for complex, agent-driven engineering tasks.
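The guidance in this section can be sketched as a small routing heuristic. This is an illustrative sketch only: the model identifier strings, the `Task` fields, the rule ordering, and the cost-first default are all assumptions made for the example, not an official API or the behavior of any routing product.

```python
from dataclasses import dataclass

# Hypothetical identifiers; check your provider's documentation for real names.
DEEPSEEK_FLASH = "deepseek-v4-flash"
CLAUDE_HAIKU = "claude-4.5-haiku"


@dataclass
class Task:
    needs_self_hosting: bool = False  # air-gapped or private deployment required
    bulk_long_context: bool = False   # e.g. full repository scans, log analysis
    latency_sensitive: bool = False   # e.g. IDE plugins, interactive UI
    needs_tool_use: bool = False      # autonomous, agent-driven workflows


def choose_model(task: Task) -> str:
    """Pick a model following the rules of thumb in this section."""
    # A self-hosting requirement is a hard constraint, so it wins over everything.
    if task.needs_self_hosting:
        return DEEPSEEK_FLASH
    # Interactive and tool-driven work goes to the managed API.
    if task.latency_sensitive or task.needs_tool_use:
        return CLAUDE_HAIKU
    # Large batch inputs favor the lower per-token cost.
    if task.bulk_long_context:
        return DEEPSEEK_FLASH
    # Assumption: default to the cheaper option when nothing else applies.
    return DEEPSEEK_FLASH


if __name__ == "__main__":
    print(choose_model(Task(latency_sensitive=True)))
    print(choose_model(Task(bulk_long_context=True)))
```

Treating self-hosting as a hard constraint ahead of latency is a design choice for this sketch; a team with different priorities would reorder the checks.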
