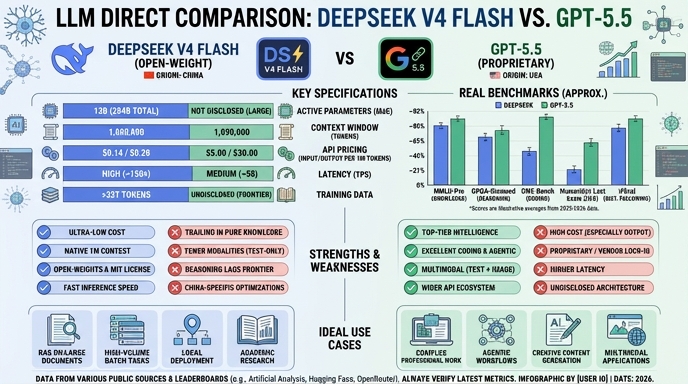

The release of DeepSeek V4 Flash and OpenAI's GPT-5.5 marks a significant shift in the 2026 LLM landscape. Developers now face a clear choice between extreme cost-efficiency and high-stakes agentic performance. DeepSeek V4 Flash, an open-weights Mixture-of-Experts model, prioritizes throughput and accessibility. It offers a 1-million-token context window and competes with frontier models at a fraction of the operational cost. It is designed for high-volume, latency-sensitive pipelines where budget is a primary constraint.

GPT-5.5, by contrast, represents the current pinnacle of proprietary agentic models. It is built for complex, multi-step workflows and is deeply integrated into established ecosystems such as GitHub Copilot and the OpenAI API. It excels at tasks that require high-level reasoning and nuanced tool coordination. For developers, the decision hinges on what the application needs. One option is the reliability and safety hardening of a flagship frontier model. The other is the aggressive, scalable economics of an open-weights deployment.

[Visual comparison charts: benchmark scores (higher is better); strengths and weaknesses]

When to use each model

Choose DeepSeek V4 Flash for high-volume, cost-sensitive production applications where maximizing ROI is the priority. It is ideal for internal data-processing pipelines, automated support systems, and high-frequency code generation. In these cases, the option to self-host or pay low API prices outweighs the need for frontier-level reasoning. It is particularly effective when large inputs require a long context window and you want to avoid the cost overhead of proprietary frontier models.

Choose GPT-5.5 for mission-critical agentic systems that need the highest possible success rate on complex, multi-step problems. It is the strongest choice for sophisticated AI agents that must coordinate tools, work within complex codebases, and stay reliable in professional environments. Use it when the cost of a failed task is high and your product depends on OpenAI's advanced reasoning, ecosystem integration, and robust safety guardrails.
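The guidance above boils down to a rule of thumb, sketched here as a small decision function. This is an illustration only. The `Workload` fields, the `choose_model` name, and the precedence of the rules are assumptions, not part of either vendor's API or the Select platform. The returned strings are display names, not real model identifiers.

```python
from dataclasses import dataclass

# Hypothetical display names, NOT real API model identifiers.
DEEPSEEK_V4_FLASH = "DeepSeek V4 Flash"
GPT_5_5 = "GPT-5.5"


@dataclass
class Workload:
    """Illustrative task profile; every field here is an assumption."""
    multi_step_agentic: bool  # needs tool coordination across many steps
    failure_cost_high: bool   # a failed task is expensive or mission-critical
    cost_sensitive: bool      # budget is a primary constraint


def choose_model(w: Workload) -> str:
    """Apply the article's rule of thumb (assumed precedence, not an official policy)."""
    # A high cost of failure outweighs price, so favor the frontier model.
    if w.failure_cost_high:
        return GPT_5_5
    # Complex agentic work favors GPT-5.5 unless budget is the main constraint.
    if w.multi_step_agentic and not w.cost_sensitive:
        return GPT_5_5
    # Otherwise, default to the cheaper open-weights model.
    return DEEPSEEK_V4_FLASH


if __name__ == "__main__":
    batch_job = Workload(multi_step_agentic=False, failure_cost_high=False, cost_sensitive=True)
    print(choose_model(batch_job))  # DeepSeek V4 Flash
```

Putting `failure_cost_high` first mirrors the advice to pick GPT-5.5 "when the cost of a failed task is high." A real router would likely weigh measured benchmark results and per-token prices rather than three booleans.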

Ready to build?

Try both models on Select

One API key. Intelligent routing. DeepSeek V4 Flash and GPT-5.5 available now.

Pay as you go. No subscription required.