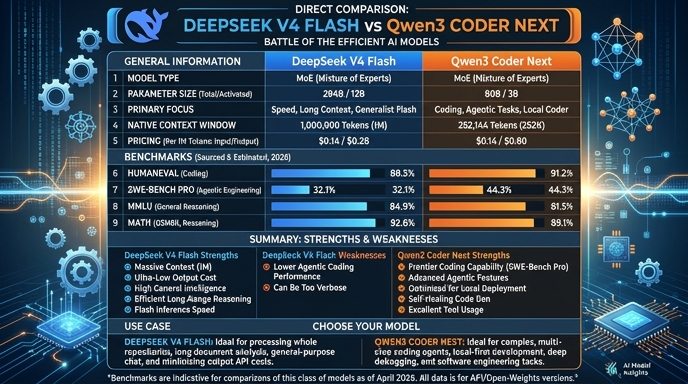

DeepSeek V4 Flash and Qwen3 Coder Next represent two distinct approaches to efficient, high-performance AI deployment for software engineering. DeepSeek V4 Flash, released in April 2026, utilizes a large 284B MoE parameter count with 13B active parameters to deliver near-frontier reasoning and coding capabilities. It is built for production environments requiring substantial context handling (1M tokens) and economical inference.

Qwen3 Coder Next, released in February 2026, focuses on agentic optimization and local development. With an 80B MoE architecture (3B active parameters), it is engineered for lower hardware footprint and rapid local inference while maintaining competitive coding performance. Developers must weigh DeepSeek's superior context window and reasoning benchmarks against Qwen3's specialized, agent-ready training and accessibility for local-first workflows.

Visual comparison

Click to view full size

Benchmark scores

Higher is better

Strengths and weaknesses

When to use each model

Choose DeepSeek V4 Flash when your application requires processing large codebases, long documentation, or complex agent workflows that demand extensive context. It is the superior choice for cloud-based production environments where throughput, reasoning depth, and cost efficiency are the primary drivers for high-scale, document-heavy operations.

Choose Qwen3 Coder Next for local development environments, offline AI coding assistants, and rapid prototyping on hardware with limited VRAM. It is best suited for scenarios where data privacy is paramount, low-latency responses are required for individual dev-agent interactions, and you need a specialized model for iterative execution and local debugging.

Ready to build?

Try both models on Select

One API key. Intelligent routing. DeepSeek V4 Flash and Qwen3 Coder Next available now.

Open Select →Pay as you go. No subscription required.