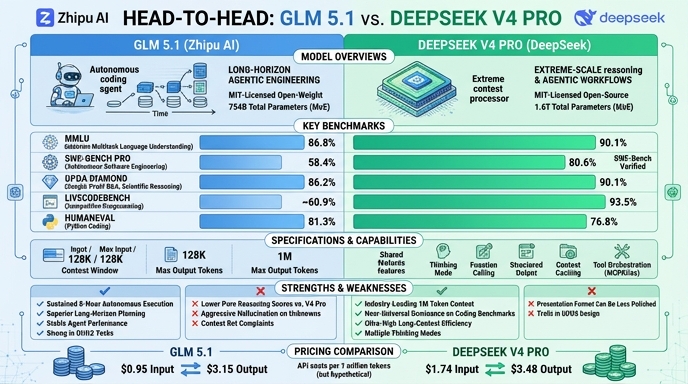

The 2026 landscape for open-weight frontier models has shifted significantly with the arrival of GLM 5.1 and DeepSeek V4 Pro. GLM 5.1, developed by Z.ai, is positioned as a specialist for complex software engineering and long-horizon agentic tasks. Its 754B-parameter architecture emphasizes deep reasoning and autonomous debugging. It has gained substantial traction thanks to its MIT license, its suitability for self-hosting, and its strong results on real-world coding benchmarks.

DeepSeek V4 Pro enters the market as a massive 1.6-trillion-parameter Mixture-of-Experts model focused on extreme cost-efficiency and a native 1-million-token context window. DeepSeek designed it to compete directly with closed-source frontier systems. It offers hybrid thinking and non-thinking modes, which allow fast inference at a lower price point. For developers, the choice between the two usually comes down to workload: GLM 5.1 specializes in agentic coding, while DeepSeek V4 Pro offers broader general-purpose utility and massive context capacity.

Visual comparison

[Chart: benchmark scores for GLM 5.1 and DeepSeek V4 Pro (higher is better)]

Strengths and weaknesses

When to use each model

Choose GLM 5.1 when your primary objective is autonomous software engineering. If your workflow involves complex multi-step debugging, long-horizon coding tasks, or agentic systems that require deep logical planning, GLM 5.1 is optimized for exactly this kind of high-complexity work and often outperforms other frontier models on certain coding-centric test suites.

Choose DeepSeek V4 Pro when you need a high-context, cost-effective model for general-purpose AI agent workloads. It is the better choice for applications that must ingest large amounts of data at once, such as full repositories or extensive documentation. Its hybrid reasoning modes make it versatile enough to handle both simple queries and complex analysis at a fraction of the cost of standard proprietary models.
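The guidance above can be expressed as a simple routing rule. The sketch below is illustrative only and rests on several assumptions. The model identifiers (`glm-5.1`, `deepseek-v4-pro`), the task labels, and the rough heuristic of about 4 characters per token are all hypothetical. The GLM 5.1 context limit is passed in as a parameter because this article does not state it. Only the 1-million-token window for DeepSeek V4 Pro comes from the text.

```python
from dataclasses import dataclass

# Assumption: ~4 characters per token is a rough rule of thumb for English
# text and source code; each model's real tokenizer will differ.
CHARS_PER_TOKEN = 4

# DeepSeek V4 Pro context window as stated in the article (1M tokens).
DEEPSEEK_V4_PRO_CONTEXT = 1_000_000

# Hypothetical model identifiers; use the names your provider documents.
GLM_51 = "glm-5.1"
DEEPSEEK_V4_PRO = "deepseek-v4-pro"


@dataclass
class Task:
    kind: str           # hypothetical labels: "agentic_coding" or "general"
    context_chars: int  # total input size: repo files, docs, prompt


def estimate_tokens(num_chars: int) -> int:
    """Rough token estimate via the chars-per-token heuristic, rounded up."""
    return -(-num_chars // CHARS_PER_TOKEN)


def choose_model(task: Task, glm_context_limit: int) -> str:
    """Pick a model following the 'when to use each model' guidance.

    glm_context_limit is a caller-supplied assumption, not a documented value.
    """
    tokens = estimate_tokens(task.context_chars)
    # Inputs too large for GLM 5.1 go to the 1M-token DeepSeek V4 Pro window.
    if tokens > glm_context_limit:
        if tokens > DEEPSEEK_V4_PRO_CONTEXT:
            raise ValueError(f"~{tokens} tokens exceeds both context windows")
        return DEEPSEEK_V4_PRO
    # Multi-step debugging and long-horizon coding agents favor GLM 5.1.
    if task.kind == "agentic_coding":
        return GLM_51
    # Everything else goes to the cost-effective general-purpose option.
    return DEEPSEEK_V4_PRO


# Usage with a hypothetical GLM 5.1 limit of 200k tokens.
GLM_LIMIT = 200_000
print(choose_model(Task("agentic_coding", 120_000), GLM_LIMIT))    # glm-5.1
print(choose_model(Task("agentic_coding", 2_000_000), GLM_LIMIT))  # deepseek-v4-pro
print(choose_model(Task("general", 40_000), GLM_LIMIT))            # deepseek-v4-pro
```

In this sketch, context size is checked before task type. An input that cannot fit in the smaller window has only one viable option, regardless of how well-suited the other model is to the task.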

Ready to build?

Try both models on Select

One API key. Intelligent routing. GLM 5.1 and DeepSeek V4 Pro available now.

Pay as you go. No subscription required.