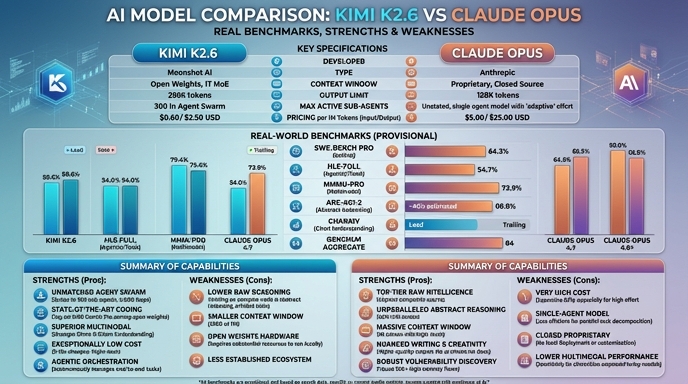

For software developers assessing modern LLM capabilities, the choice between Kimi K2.6 and Claude Opus represents a decision between a purpose-built, open-weight agentic engine and a high-reasoning proprietary frontier model. Kimi K2.6 leverages a 1-trillion parameter Mixture-of-Experts (MoE) architecture specifically optimized for long-horizon autonomous workflows, featuring a unique "Agent Swarm" capability that orchestrates up to 300 sub-agents for complex codebases. It is designed for developers who require fine-grained control over the serving stack, lower inference costs, and the ability to self-host for compliance or customization.

Conversely, Claude Opus (specifically the 4.7 series) serves as a closed-source benchmark for raw reasoning depth, reliability, and large-scale context management. With a 1-million token context window, it excels in tasks involving massive codebase analysis, high-stakes decision-making, and production environments where latency and API maturity are prioritized over local customization. While Kimi K2.6 challenges the frontier on specific coding tasks, Claude Opus remains the incumbent for reliability and generalized reasoning tasks where proprietary optimization is non-negotiable.

Visual comparison

Click to view full size

Benchmark scores

Higher is better

Strengths and weaknesses

When to use each model

Choose Kimi K2.6 when building custom autonomous coding agents or complex CI/CD orchestration layers where you need to control the underlying model weights, optimize for cost, or implement specialized agentic swarms. It is the ideal choice for teams running massive, repetitive agent workflows (such as 24/7 automated refactoring or bug fixing) that benefit from self-hosted performance and lower latency.

Choose Claude Opus for high-stakes production environments, complex system architecture design, or projects requiring the analysis of massive documentation suites that exceed 300K tokens. It is the superior choice for organizations that prioritize model reliability, consistency in reasoning, and ready-to-use API availability over the overhead of maintaining local infrastructure.

Ready to build?

Try both models on Select

One API key. Intelligent routing. Kimi K2.6 and Claude Opus available now.

Open Select →Pay as you go. No subscription required.