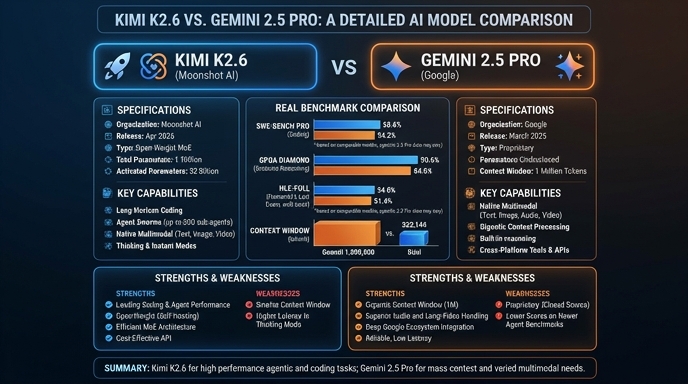

Kimi K2.6 and Gemini 2.5 Pro represent two distinct philosophies in the current frontier model landscape. Gemini 2.5 Pro is a natively multimodal, reasoning-focused model with a very large context window, which makes it effective for analyzing large codebases and working through complex multi-step reasoning tasks. It is tightly integrated into Google’s ecosystem and offers robust support for video, audio, and large-scale data analysis.

In contrast, Kimi K2.6 is a 1-trillion-parameter, open-weight Mixture-of-Experts (MoE) model built specifically for agentic workflows and long-horizon coding tasks. Its "Agent Swarm" capabilities allow it to orchestrate hundreds of sub-agents autonomously over execution windows of 12 hours or more. For developers, this splits the use cases: Gemini 2.5 Pro excels as a high-reasoning, closed-source utility for broad data synthesis, while Kimi K2.6 serves as a specialized, self-hostable engine for autonomous software engineering and multi-agent coordination.

Visual comparison

Benchmark scores

Strengths and weaknesses

When to use each model

Choose Kimi K2.6 if you are building autonomous agent pipelines, require data sovereignty via self-hosting, or need to orchestrate complex, multi-day development workflows in which the model must manage sub-agents, handle tool calls, and restructure core system architecture without constant human intervention. It suits teams that need to run a frontier-grade coding model directly on their own infrastructure.

Choose Gemini 2.5 Pro for tasks requiring deep, multi-source analysis where context length is the primary constraint. It excels at synthesizing insights from very large inputs, such as multi-hour video walkthroughs, comprehensive technical documentation, or entire multi-language repositories, where its 1M+ token window lets it keep the whole input in view at once. It is well suited to complex reasoning across diverse modalities within the Google Cloud ecosystem.
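The selection criteria above can be sketched as a small routing heuristic. This is only an illustration under stated assumptions: the `Task` fields, the model labels, the 200,000-token threshold, and the fallback choice are all hypothetical and do not correspond to any real API or to how any routing service actually works.

```python
from dataclasses import dataclass

# Hypothetical labels for illustration only; not real API model identifiers.
KIMI = "kimi-k2.6"
GEMINI = "gemini-2.5-pro"


@dataclass
class Task:
    needs_self_hosting: bool = False   # data sovereignty / local infrastructure
    autonomous_agents: bool = False    # long-running multi-agent or tool-calling pipeline
    has_video_or_audio: bool = False   # multimodal input
    context_tokens: int = 0            # estimated input size in tokens


def choose_model(task: Task, long_context_threshold: int = 200_000) -> str:
    """Pick a model label from the criteria described in the text.

    The threshold and the ordering of the checks are assumptions.
    """
    # Self-hosting is treated as a hard constraint: only the open-weight model qualifies.
    if task.needs_self_hosting:
        return KIMI
    # Multimodal or very large inputs favor the long-context multimodal model.
    if task.has_video_or_audio or task.context_tokens >= long_context_threshold:
        return GEMINI
    # Autonomous agent pipelines favor the agent-oriented model.
    if task.autonomous_agents:
        return KIMI
    # Assumed default for general analysis and synthesis work.
    return GEMINI


if __name__ == "__main__":
    print(choose_model(Task(autonomous_agents=True)))
    print(choose_model(Task(context_tokens=900_000)))
```

Treating self-hosting as the first check reflects that it is a deployment constraint rather than a preference; the remaining order is a judgment call and should be tuned to your own workload.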

Ready to build?

Try both models on Select

One API key. Intelligent routing. Kimi K2.6 and Gemini 2.5 Pro available now.

Open Select →

Pay as you go. No subscription required.