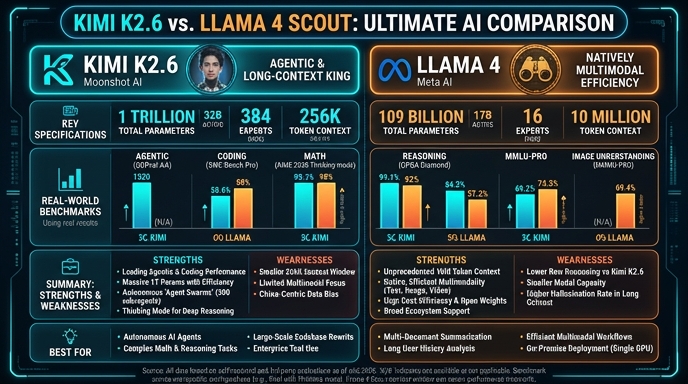

Kimi K2.6 and Llama 4 Scout represent significant, distinct advancements in the open-weights AI ecosystem, each optimized for different developer priorities. Kimi K2.6, developed by Moonshot AI, utilizes a 1T total parameter MoE architecture specifically tuned for long-horizon autonomous coding and agentic swarm workflows. It is built to minimize infrastructure overhead while providing high-performance code generation and multi-step tool orchestration, making it a strong candidate for backend automation and complex software engineering tasks.

Meta's Llama 4 Scout, conversely, is engineered as a general-purpose, natively multimodal powerhouse. With its 109B parameter MoE design and an industry-leading 10M token context window, it excels in tasks requiring the synthesis of massive datasets, document analysis, and sophisticated vision-language integration. For developers, Llama 4 Scout offers superior flexibility in handling diverse media types and extended long-context workflows, whereas Kimi K2.6 is generally preferred for specialized agentic coding and domain-specific agent swarm execution.

Visual comparison

Click to view full size

Benchmark scores

Higher is better

Strengths and weaknesses

When to use each model

Choose Kimi K2.6 when building autonomous software agents, IDE plugins, or coding-driven automation workflows that require deep, long-horizon reasoning and frequent tool interaction. It is the superior choice for developers who need specialized agent swarm orchestration where multiple sub-agents must coordinate to complete complex, end-to-end full-stack software development tasks efficiently and reliably.

Choose Llama 4 Scout when your application demands native multimodal processing, such as analyzing large-scale document repositories, video content, or complex graphical layouts alongside text. Its massive context window and general-purpose capabilities make it ideal for building persistent assistants that require long-term memory, cross-domain knowledge synthesis, and advanced RAG systems that ingest vast amounts of heterogeneous data.

Ready to build?

Try both models on Select

One API key. Intelligent routing. Kimi K2.6 and Llama 4 Scout available now.

Open Select →Pay as you go. No subscription required.