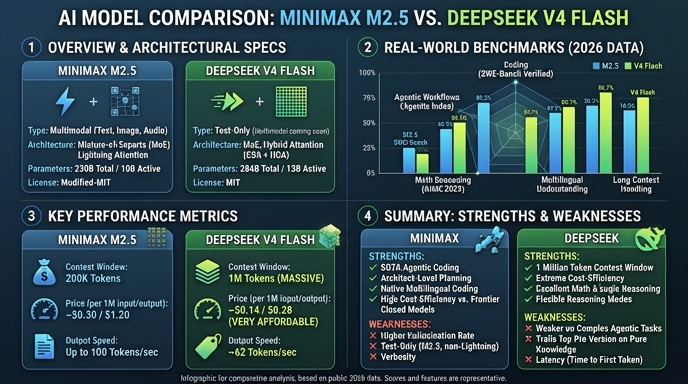

MiniMax M2.5 and DeepSeek V4 Flash represent two distinct approaches to efficient large language model serving as of mid-2026. MiniMax M2.5 focuses on agentic productivity and software engineering, leveraging a specialized Mixture-of-Experts (MoE) architecture designed for complex task decomposition, file manipulation, and high-fidelity tool usage in enterprise environments. Its training is heavily optimized for agentic loops, prioritizing successful task completion over raw token generation speed.

DeepSeek V4 Flash, conversely, prioritizes high-throughput serving and cost-efficiency for long-context applications. Architected for massive scale with a 1M-token window, it excels in scenarios requiring rapid inference and low-latency interaction. While M2.5 is tuned for deep, multi-turn software development and complex reasoning, DeepSeek V4 Flash is engineered as a high-performance engine for applications that demand high volume, speed, and cost-effective utilization of large context windows.

Visual comparison

Click to view full size

Benchmark scores

Higher is better

Strengths and weaknesses

When to use each model

Choose MiniMax M2.5 when building autonomous software engineering agents, complex data analysis pipelines, or automated office productivity tools. Its architecture is specifically optimized for tasks that require heavy function calling, high reasoning maturity, and the ability to operate across diverse file types and environments, making it ideal for systems that act as 'co-pilots' rather than just chat interfaces.

Choose DeepSeek V4 Flash when your primary requirements are low-latency serving, high-throughput document processing, or building cost-sensitive conversational interfaces. It is the superior choice for RAG (Retrieval-Augmented Generation) applications, large-scale summarization of high-context inputs, and any high-traffic environment where balancing speed and operational costs is critical.

Ready to build?

Try both models on Select

One API key. Intelligent routing. MiniMax M2.5 and DeepSeek V4 Flash available now.

Open Select →Pay as you go. No subscription required.