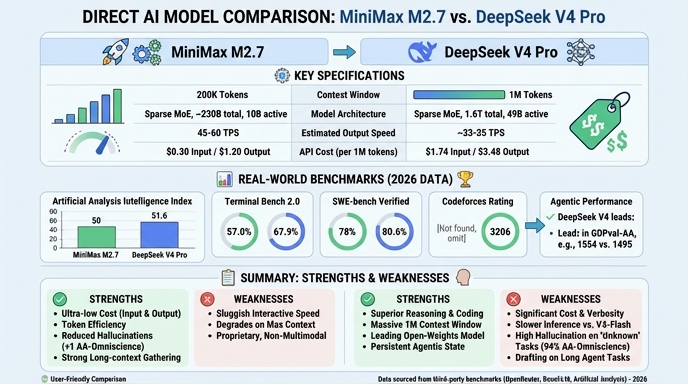

MiniMax M2.7 and DeepSeek V4 Pro represent two distinct approaches to high-performance AI deployment in 2026. MiniMax M2.7, built as a self-evolving agent-centric model, emphasizes specialized productivity workflows and multi-agent system orchestration, making it highly effective for complex, recursive software engineering and document-heavy corporate environments. Its architectural design prioritizes autonomous task completion and the capability to manage intricate project lifecycles.

DeepSeek V4 Pro, by contrast, is a massive 1.6T-parameter Mixture-of-Experts (MoE) model engineered for deep reasoning and broad-scale coding utility. It is optimized for high-throughput, latency-sensitive applications where near-frontier performance is required without the premium cost of closed-source models. For developers, the choice comes down to priority: specialized, autonomous agentic task execution (MiniMax) or high-volume, general-purpose reasoning and coding performance (DeepSeek).

Benchmark scores (higher is better)

When to use each model

Choose MiniMax M2.7 when your primary objective is building autonomous agent teams for specialized professional workflows. It excels in environments where the model must manage complex file formats, maintain state across multi-step project lifecycles, and handle recursive tasks that require self-correction or internal memory optimization. It is particularly effective for SRE-level root-cause analysis and automated document engineering, where strict tool compliance is critical.

Choose DeepSeek V4 Pro for large-scale software engineering, codebase analysis, and general deep-reasoning applications. Its parameter scale and strong performance on coding leaderboards make it well suited to developers who need a robust, cost-effective substitute for frontier models in code generation, bug fixing, and repository-wide refactoring. Its long-context capacity and open weights offer significant flexibility for integration into custom, latency-sensitive production pipelines.
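The guidance above can be expressed as a simple routing rule. The following is a minimal sketch, not an official integration: the task categories, the `choose_model` helper, and the model label strings are all hypothetical names chosen for illustration, and real model identifiers should be taken from your provider's documentation.

```python
from enum import Enum


class Task(Enum):
    """Hypothetical task categories drawn from the guidance above."""
    AGENT_ORCHESTRATION = "agent_orchestration"
    DOCUMENT_ENGINEERING = "document_engineering"
    ROOT_CAUSE_ANALYSIS = "root_cause_analysis"
    CODE_GENERATION = "code_generation"
    BUG_FIXING = "bug_fixing"
    REPO_REFACTORING = "repo_refactoring"
    GENERAL_REASONING = "general_reasoning"


# Illustrative labels only (assumption): not confirmed API model IDs.
MINIMAX = "minimax-m2.7"
DEEPSEEK = "deepseek-v4-pro"

# Workloads the text associates with MiniMax M2.7.
AGENTIC_TASKS = {
    Task.AGENT_ORCHESTRATION,
    Task.DOCUMENT_ENGINEERING,
    Task.ROOT_CAUSE_ANALYSIS,
}


def choose_model(task: Task) -> str:
    """Return the model label suggested for a task category.

    Agentic, stateful workflows map to MiniMax M2.7; everything else
    (coding and general reasoning) maps to DeepSeek V4 Pro.
    """
    return MINIMAX if task in AGENTIC_TASKS else DEEPSEEK
```

In practice a team might refine this mapping with its own cost and latency measurements; the fixed lookup here only mirrors the qualitative recommendations in this section.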

Ready to build?

Try both models on Select

One API key. Intelligent routing. MiniMax M2.7 and DeepSeek V4 Pro available now.

Pay as you go. No subscription required.