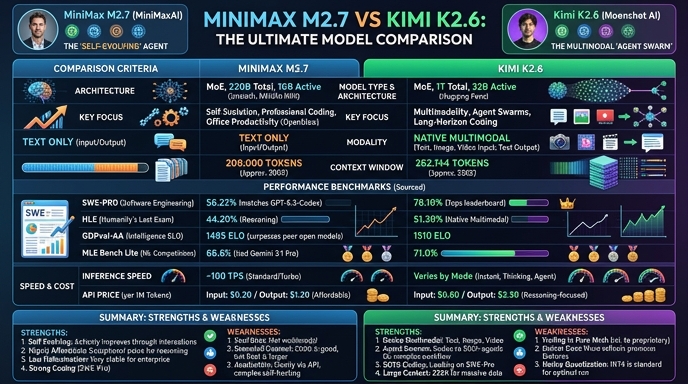

MiniMax M2.7 and Kimi K2.6 are specialized open-weight models built for complex engineering and agentic workflows. MiniMax M2.7 differentiates itself through a recursive self-evolution architecture that prioritizes system-level reasoning and high-fidelity project delivery. It excels at tasks that require extensive context gathering, such as refactoring large codebases or troubleshooting SRE-level production incidents.

Kimi K2.6, by contrast, focuses on high-throughput agent swarms and multi-step tool orchestration. It is built to operate autonomously on long-horizon tasks, using a 1-trillion-parameter Mixture-of-Experts (MoE) architecture, and is particularly effective for front-end development, DevOps automation, and parallel task execution. Developers choosing between the two must weigh MiniMax's deeper reasoning against Kimi's stronger agent-swarm and tool-use scalability.

Visual comparison

[Chart: benchmark scores for MiniMax M2.7 and Kimi K2.6; higher is better]

Strengths and weaknesses

When to use each model

Choose MiniMax M2.7 when your primary need is deep engineering work handled by a single, highly capable engine. It is well suited to complex refactoring, root-cause analysis in distributed systems, and scenarios where the model must understand the operational logic and collaborative dynamics of an entire code repository. If your workflow depends on a model that reasons at length before proposing significant architectural changes, M2.7 provides that depth.

Choose Kimi K2.6 when building autonomous agentic workflows that require massive parallelization and persistent operation. It is the better choice for agent swarms that decompose large, ambiguous requirements into hundreds of specialized subtasks, such as end-to-end web development, automated DevOps pipelines, or large-scale data analysis that benefits from horizontal scaling. K2.6 excels in production environments where the model runs as a background agent orchestrating operations around the clock.
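The guidance above can be sketched as a small selection heuristic. This is only an illustration: the model identifier strings, the task category labels, the `Task` fields, and the `swarm_threshold` cutoff are all assumptions made for the example, not names or values published by either vendor or by any routing service.

```python
from dataclasses import dataclass

# Hypothetical identifiers; check your provider's catalog for the real names.
MINIMAX = "minimax-m2.7"
KIMI = "kimi-k2.6"

# Illustrative category labels based on the use cases described above.
DEEP_REASONING_TASKS = {"refactoring", "root_cause_analysis", "architecture_review"}
SWARM_TASKS = {"web_development", "devops_automation", "data_analysis"}


@dataclass
class Task:
    kind: str                    # one of the category labels above
    parallel_subtasks: int = 1   # how many subtasks the work fans out into
    long_running: bool = False   # True for persistent background agents


def choose_model(task: Task, swarm_threshold: int = 10) -> str:
    """Pick a model name for a task using the rules of thumb above."""
    # Heavy fan-out or persistent background operation favors Kimi K2.6.
    # The threshold of 10 is an arbitrary value chosen for this sketch.
    if task.parallel_subtasks >= swarm_threshold or task.long_running:
        return KIMI
    if task.kind in SWARM_TASKS:
        return KIMI
    # Single-engine, deep-reasoning work favors MiniMax M2.7.
    if task.kind in DEEP_REASONING_TASKS:
        return MINIMAX
    raise ValueError(f"unknown task kind: {task.kind!r}")


if __name__ == "__main__":
    print(choose_model(Task("refactoring")))
    print(choose_model(Task("data_analysis", parallel_subtasks=200)))
```

In practice the boundary is fuzzier than a lookup table; a refactoring job that fans out across hundreds of files may still be better served by the swarm-oriented model, which is why the fan-out check runs first in this sketch.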

Ready to build?

Try both models on Select

One API key. Intelligent routing. MiniMax M2.7 and Kimi K2.6 available now.

Pay as you go. No subscription required.