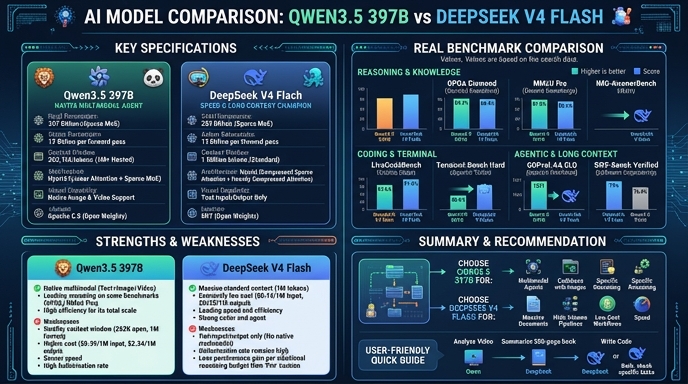

Qwen3.5 397B and DeepSeek V4 Flash represent two distinct approaches to high-performance AI deployment in 2026. Qwen3.5 397B, developed by Alibaba, utilizes a 397B parameter Mixture-of-Experts (MoE) architecture with 17B active parameters, positioning it as a dense-like performer suitable for complex agentic workflows and scientific reasoning. It is designed to balance the depth of a massive model with the efficiency required for professional-grade application development, particularly where instruction following and reasoning reliability are paramount.

DeepSeek V4 Flash, conversely, is engineered as a highly optimized, efficiency-focused MoE model with 284B total parameters and 13B active. Released by DeepSeek, it emphasizes throughput, low-latency inference, and cost-efficiency without sacrificing near-frontier reasoning capabilities. For developers, the choice between these two often comes down to specific infrastructure needs: Qwen3.5 397B for tasks demanding maximum reasoning depth and multi-modal nuance, versus DeepSeek V4 Flash for high-volume, cost-sensitive production environments that require rapid response times.

Visual comparison

Click to view full size

Benchmark scores

Higher is better

Strengths and weaknesses

When to use each model

Choose Qwen3.5 397B when your application demands the highest possible reasoning accuracy and multimodal understanding, particularly in scenarios such as scientific research assistants, complex code analysis pipelines, or advanced agentic systems that require deep logical chaining. It is the optimal choice for projects where the quality and precision of the response take precedence over infrastructure cost, or where multi-step logical deduction is a core feature of the product.

Choose DeepSeek V4 Flash for production environments where cost-efficiency and high throughput are the primary constraints. It is ideally suited for real-time customer support agents, high-volume data processing tasks, and any workflow where you need to process large amounts of data quickly without the expense of running a frontier-scale model. Its optimized architecture makes it the superior choice for scaling AI features across large user bases while maintaining strict latency requirements.

Ready to build?

Try both models on Select

One API key. Intelligent routing. Qwen3.5 397B and DeepSeek V4 Flash available now.

Open Select →Pay as you go. No subscription required.